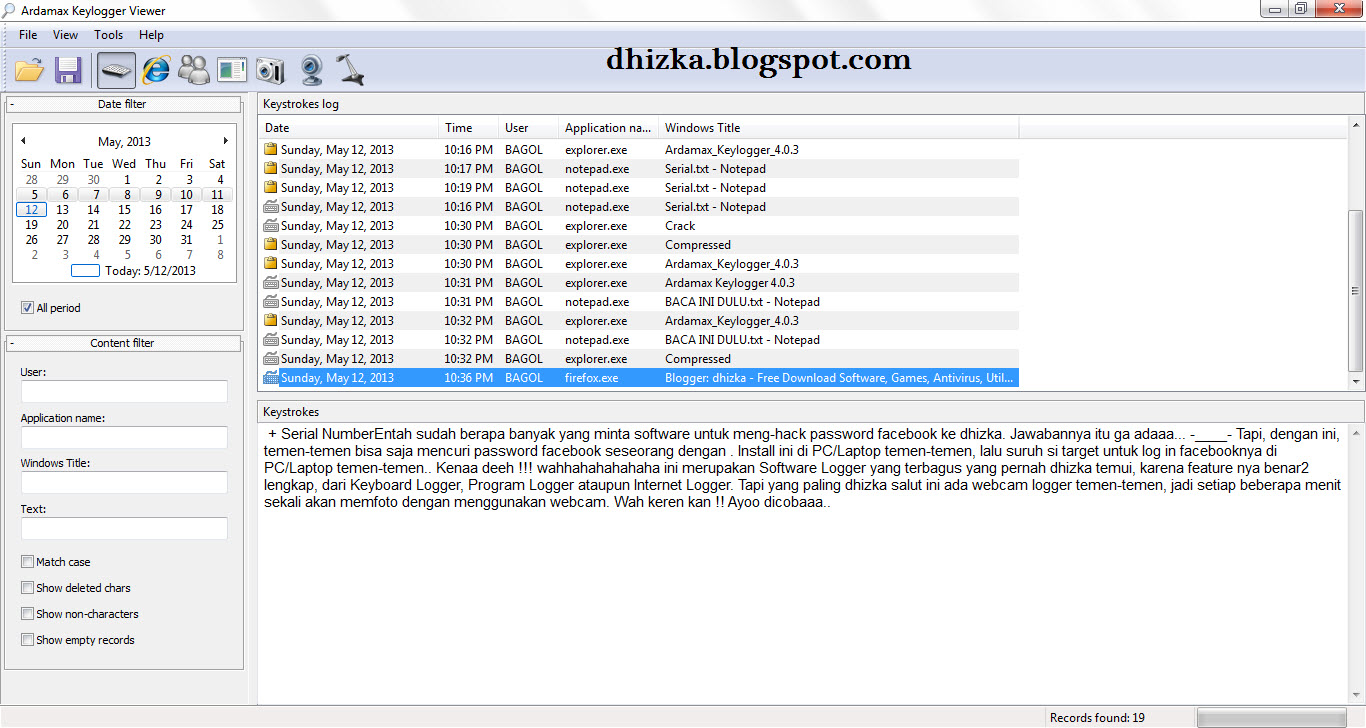

I added some annotations to the screenshot above. If you are new to this visualization, see my page on latency heat maps.

This uses kernel dynamic tracing (kprobes) to instrument functions for the issue and completion of block device I/O. Custom eBPF programs execute when these kprobes are hit, which record timestamps on the issue of I/O, fetch them on completion, calculate the delta time, and then store this in a log2 histogram. The user space program reads this histogram array periodically, once per second, and draws the heat map. The summarization is all done in kernel context, for efficiency.

Useful one-liners using the bcc (eBPF) tools:

Single Purpose Tools

    # Trace new processes:
    # Trace file opens with process and filename:
    # Summarize block I/O (disk) latency as a power-of-2 distribution by disk:
    # Summarize block I/O size as a power-of-2 distribution by program name:
    # Trace common ext4 file system operations slower than 1 millisecond:
    # Trace TCP active connections (connect()) with IP address and ports:
    # Trace TCP passive connections (accept()) with IP address and ports:
    # Trace TCP connections to local port 80, with session duration:
    # Trace TCP retransmissions with IP addresses and TCP state:
    # Sample stack traces at 49 Hertz for 10 seconds, emit folded format (for flame graphs):
    # Trace details and latency of resolver DNS lookups:
    # Trace commands issued in all running bash shells:

Multi Tools: Kernel Dynamic Tracing

    # Count "tcp_send*" kernel function, print output every second:
    # Trace file names opened, using dynamic tracing of the kernel do_sys_open() function:
    # Same as before ("p::" is assumed if not specified):
    # Trace the return of the kernel do_sys_open() function, and print the retval:
    # Trace do_nanosleep() kernel function and the second argument (mode), with kernel stack traces:
    # Trace do_nanosleep() mode by providing the prototype (no debuginfo required):
    trace 'do_nanosleep(struct hrtimer_sleeper *t, enum hrtimer_mode mode) "mode: %d", mode'
    # Trace do_nanosleep() with the task address (may be NULL), noting the dereference:
    trace 'do_nanosleep(struct hrtimer_sleeper *t, enum hrtimer_mode mode) "task: %x", t->task'
    # Frequency count tcp_sendmsg() size:
    argdist -C 'p::tcp_sendmsg(struct sock *sk, struct msghdr *msg, size_t size):u32:size'
    # Summarize tcp_sendmsg() size as a power-of-2 histogram:
    argdist -H 'p::tcp_sendmsg(struct sock *sk, struct msghdr *msg, size_t size):u32:size'
    # Frequency count stack traces that lead to the submit_bio() function (disk I/O issue):
    # Summarize the latency (time taken) by the vfs_read() function for PID 181:

Multi Tools: User Level Dynamic Tracing

    # Trace the libc library function nanosleep() and print the requested sleep details:
    trace 'p:c:nanosleep(struct timespec *req) "%d sec %d nsec", req->tv_sec, req->tv_nsec'
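The heat map description above (record a timestamp on I/O issue, fetch it on completion, compute the delta, store it in a log2 histogram) is the same power-of-2 summarization that several of the one-liners produce. As a rough illustration, here is a minimal user-space simulation in plain Python. It is not eBPF code and does not use the BCC API; the names `LatencyHistogram`, `on_issue`, `on_complete`, and `log2_bucket` are hypothetical, and the bucketing is a simple floor(log2) that may not match the exact slot numbering the BCC tools use.

```python
from collections import defaultdict


def log2_bucket(value):
    """Return floor(log2(value)) for value >= 1; values 0 and 1 share bucket 0."""
    return max(int(value).bit_length() - 1, 0)


class LatencyHistogram:
    """Hypothetical stand-in for the per-event logic described in the text."""

    def __init__(self):
        self.start_ns = {}            # request id -> issue timestamp (ns)
        self.hist = defaultdict(int)  # log2 bucket index -> event count

    def on_issue(self, req_id, now_ns):
        # Analogous to the probe on I/O issue: remember when it started.
        self.start_ns[req_id] = now_ns

    def on_complete(self, req_id, now_ns):
        # Analogous to the probe on I/O completion: fetch the stored
        # timestamp, compute the delta, and bump one histogram bucket.
        issued = self.start_ns.pop(req_id, None)
        if issued is None:
            return  # completion seen without a matching issue; skip it
        delta_us = (now_ns - issued) // 1000
        self.hist[log2_bucket(delta_us)] += 1

    def rows(self):
        """Yield (low_us, high_us, count) for each populated bucket."""
        for bucket in sorted(self.hist):
            low = 0 if bucket == 0 else 1 << bucket
            high = (1 << (bucket + 1)) - 1
            yield low, high, self.hist[bucket]


# Small demonstration with made-up timestamps (nanoseconds).
demo = LatencyHistogram()
demo.on_issue("req1", 0)
demo.on_complete("req1", 3_000)        # 3 us
demo.on_issue("req2", 1_000)
demo.on_complete("req2", 1_001_000)    # 1000 us
for low, high, count in demo.rows():
    print(f"{low:>6} -> {high:<6} usecs : {count}")
```

Only the small bucket array needs to be read out periodically, rather than every event, which is the efficiency argument made for doing the summarization in kernel context.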

eBPF tracing is suited for answering questions like:

- Are any ext4 operations taking longer than 50 ms?
- What is run queue latency, as a histogram?
- Which packets and apps are experiencing TCP retransmits? Trace efficiently (without tracing send/receive).
- What is the stack trace when threads block (off-CPU), and how long do they block for?

eBPF can also be used for security modules and software defined networks. I'm not covering those here (yet, anyway). Also note: eBPF is often called just "BPF", especially on lkml.

On this page I'll describe eBPF, the front-ends, and demonstrate some of the tracing tools I've developed. I also developed over 100 more for my book: BPF Performance Tools: Linux System and Application Observability. If you are new to eBPF tracing, start at the Front Ends to understand the options. For end users, you can also see my post Learn eBPF Tracing: Tutorial and Examples; for product developers, see How To Add eBPF Observability To Your Product.

The screenshot at the top of this page is an example of tracing and showing block (disk) I/O as a latency heat map.

This page shows examples of performance analysis tools using enhancements to BPF (Berkeley Packet Filter) which were added to the Linux 4.x series kernels, allowing BPF to do much more than just filtering packets. These enhancements allow custom analysis programs to be executed on Linux dynamic tracing, static tracing, and profiling events.

The main and recommended front-ends for BPF tracing are BCC and bpftrace: BCC for complex tools and daemons, and bpftrace for one-liners and short scripts. If you are looking for tools to run, try BCC then bpftrace. If you want to program your own, start with bpftrace, and only use BCC if needed. I've ported many of my older tracing tools to both BCC and bpftrace, and their repositories provide over 100 tools between them.
